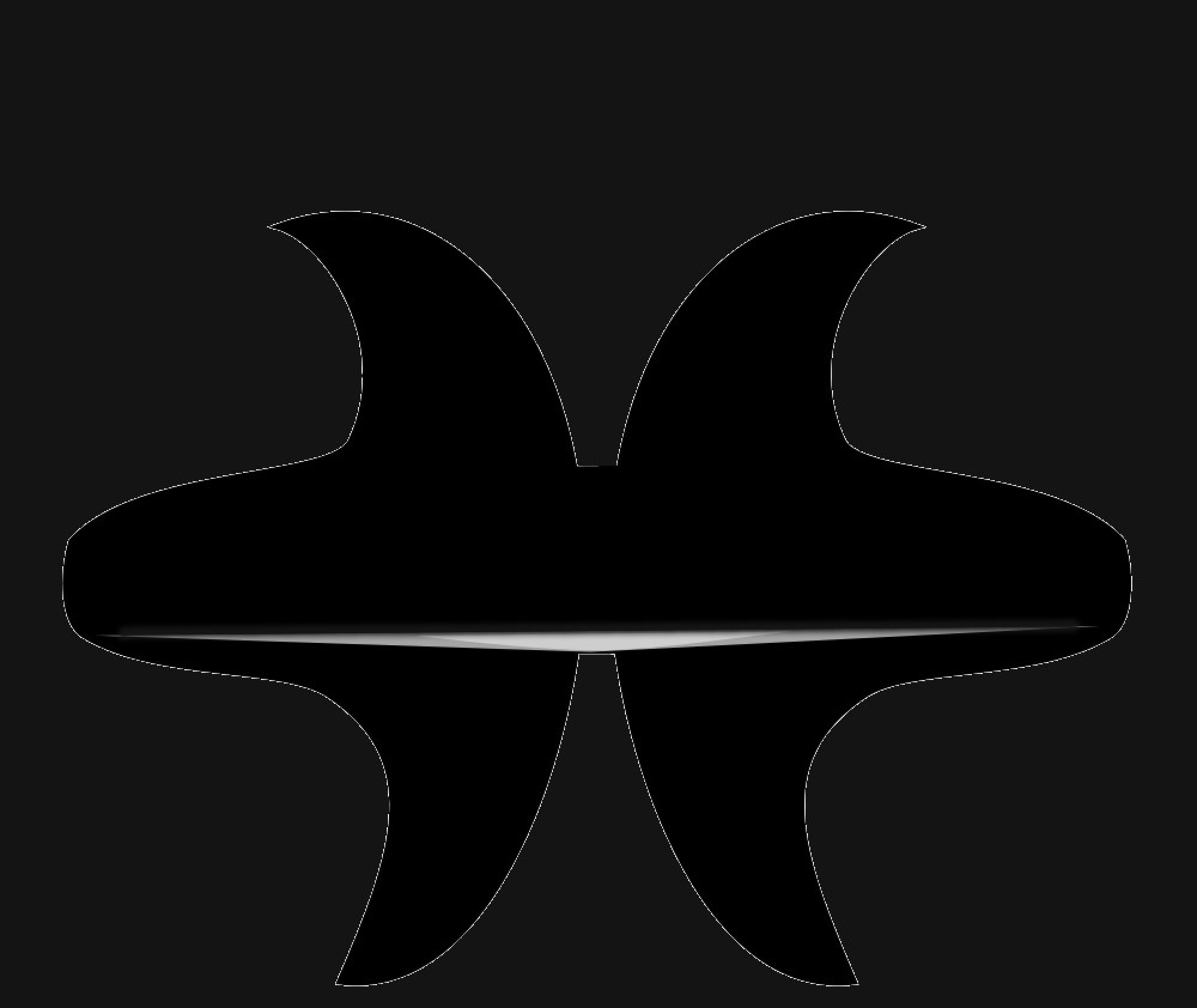

If I were to summarize my work from 2024 in a single graph, then this would be it 🙂

So I finalized the LTP/D algorithm. It’s meant to calculate the neuronal response, in terms of cycles to activation, when the number of synapses changes, the frequency changes, synapses have different latencies or different distances, synapses learn… It took me a whole year and I’m still not sold on it… I tested it on all scenarios and the response is consistent, but in many ways it is not what I expected. What I obtained is murky, not clear-cut. Anyway, it’s good enough to use in a network.

So for 2024 I had a single goal: to have an LTP/D algorithm. For sure I was hoping for more… much, much more, but in the end I’m happy that I have a clear starting point for 2025.

For 2025 I want to be able to “learn” and reproduce an arbitrary sequence such as AABAAC, or something like that. I have abandoned the vision side of the network for now. It turns out to be much more complicated than I imagined, because the cells in the eye (horizontal, bipolar and amacrine cells) make very complex changes to the input. So for now I will work with non-variable input: for neuron N(x,y) there is a single input, say A, or white. This may seem simple, but it’s not simple at all for my system. It is easy enough to record MOUSE working in parallel, but if I want to record HAPPY, which has a repeated letter (two “P”s), then I need to use a sequence rather than working in parallel. And how to work with a sequence is totally unknown to me.
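
To make the MOUSE vs. HAPPY problem concrete, here is a tiny Python sketch. It is not my neural model, just an illustration under a loud assumption: “parallel” recording is treated as one unit per distinct letter, all active at once (an unordered set), while “sequence” recording keeps order and repeats. The function names `record_parallel`, `record_sequence` and `parallel_is_lossless` are made up for this example.

```python
def record_parallel(word):
    # Assumption: a "parallel" recording is one unit per distinct letter,
    # all active together, so order and repeats are not represented.
    return frozenset(word)


def record_sequence(word):
    # Assumption: a "sequence" recording keeps every letter, in order.
    return tuple(word)


def parallel_is_lossless(word):
    # The parallel recording keeps every letter only if no letter repeats.
    return len(record_parallel(word)) == len(word)


if __name__ == "__main__":
    for word in ("MOUSE", "HAPPY", "AABAAC"):
        print(word, sorted(record_parallel(word)), parallel_is_lossless(word))
```

Under this assumption MOUSE survives as five distinct units, HAPPY collapses to four because the two “P”s land on the same unit, and AABAAC collapses to just three. Even when no letter repeats, the set alone does not say which letter came first, so order still has to come from somewhere else.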