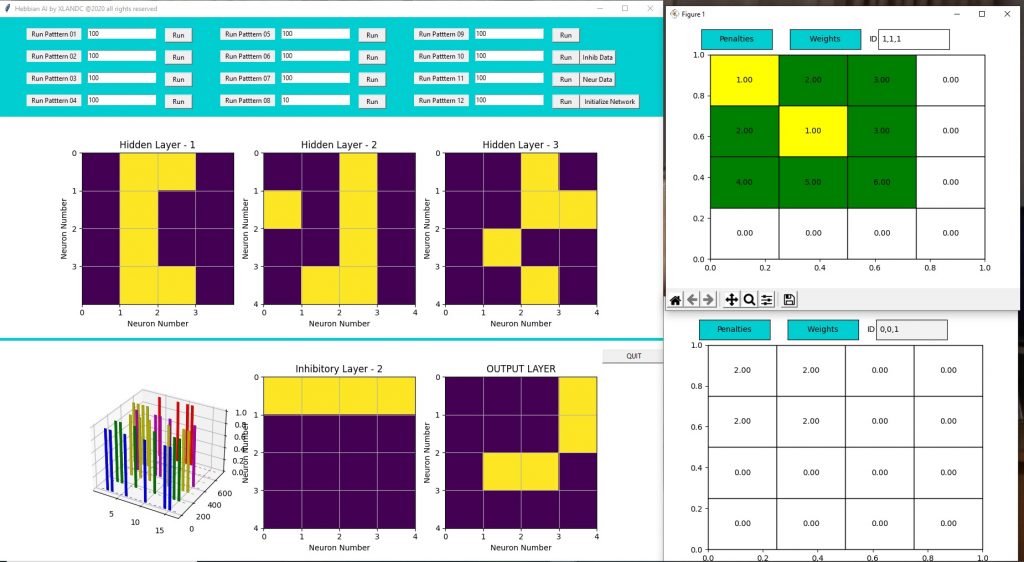

While I was focusing on fixing the synchronization issue, I lost sight of another serious issue. Once I introduced the semantics of dendrites, I lost the learning mechanism. Not only that, but I also lost the inhibition mechanism. Inhibition could have been fixed somehow, but I realized that without the current inhibition mechanism it is not possible to synchronize neuronal activity. Maybe I need two inhibitions to get back to the previous state.

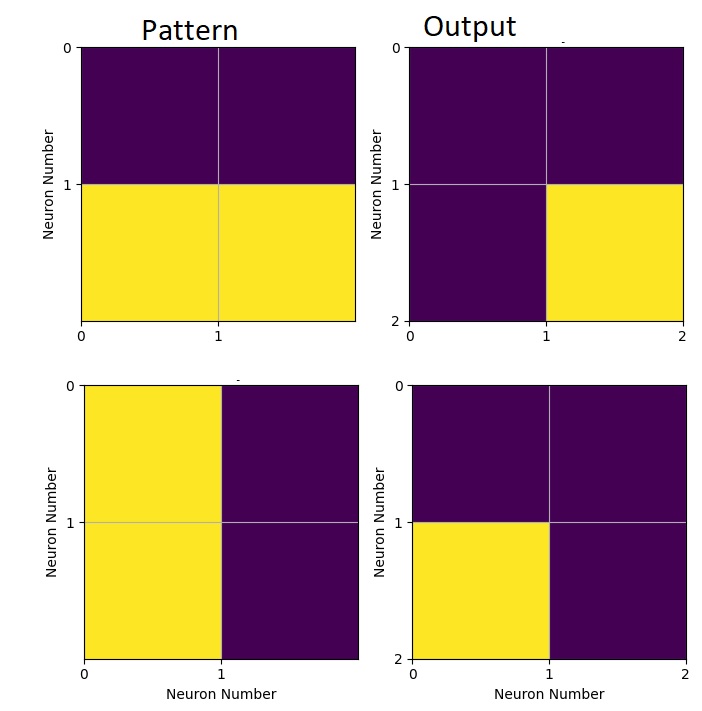

Anyway, once synchronization was somehow fixed, I realized that there was no learning anymore. Of course, I had not thought through the changes I introduced with the semantics of dendrites… I read more neuroscience articles and watched some online lectures. I was disappointed by the lack of clarity, but eventually I came up with a new theory for neuronal learning inspired by what I had learned. I ran multiple simulation scenarios in an Excel sheet and it seems to work.

The new theory is unfortunately much more complicated, meaning many things could go wrong, but it has some clear advantages and is much more in line with what’s known (or assumed) in biology:

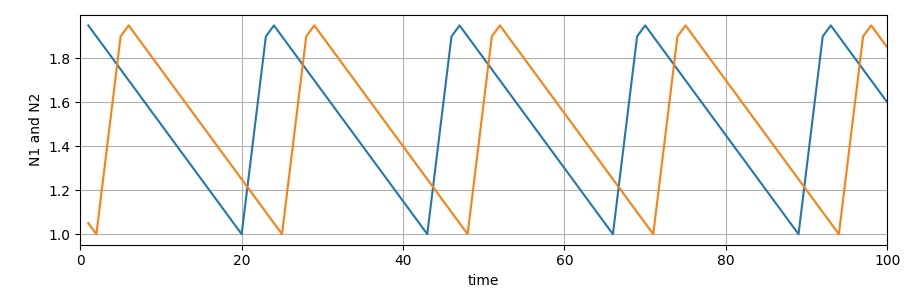

- learning now integrates firing rates (which I despise because it makes understanding more difficult)

- multiple synapses on multiple dendrites can now activate together to generate an activation potential.

- there is a new mechanism for “dendritic growth”, which is to say that I now have a rule, based on activity, for when a dendrite can accept connections. The model does not tell me when to seek new connections, though.

The drawback? Firing rates… I still use the concept as defined in my previous post, so I’m using cycles rather than time to calculate a firing rate. I’m still hoping that I won’t have to use actual time for firing rates. There are also still many unknowns: LTD is not so clear anymore, so I may still end up with yet another failure. In terms of coding, it should not be difficult to implement, but it will take some time to understand whether something is “right” or “wrong”.
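To make the "cycles, not time" idea concrete, here is a minimal sketch of one way a cycle-based firing rate could be tracked: the fraction of the last few simulation cycles in which a neuron fired. The class name `CycleFiringRate`, the `window` parameter, and the sliding-window approach are all assumptions made for illustration; they are not necessarily the definition from the previous post.

```python
from collections import deque


class CycleFiringRate:
    """Hypothetical helper: firing rate measured over simulation cycles, not time."""

    def __init__(self, window=10):
        # Keep only the most recent `window` cycles (window size is an assumption).
        self.history = deque(maxlen=window)

    def record(self, fired):
        # Call once per simulation cycle with whether the neuron fired.
        self.history.append(1 if fired else 0)

    def rate(self):
        # Fraction of recorded cycles in which the neuron fired.
        if not self.history:
            return 0.0
        return sum(self.history) / len(self.history)


tracker = CycleFiringRate(window=4)
for fired in [True, False, True, True]:
    tracker.record(fired)
print(tracker.rate())
```

Because the rate depends only on the number of cycles, it stays the same regardless of how long each cycle takes to simulate, which is the property I’m hoping to keep.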